How a CTO Catches Stale Data Before It Reaches the CEO

NeonEdge is a crypto-native iGaming platform based in Tallinn, Estonia, serving approximately fifteen thousand monthly active players across multi-chain infrastructure spanning ETH, Tron, Polygon, and Solana. The platform specialises in crash games and provably fair gaming — verticals where millisecond-level transparency isn't a feature, it's the product promise — generating roughly $5M per week in gross gaming revenue across a player base that skews toward high-frequency, high-trust operators.

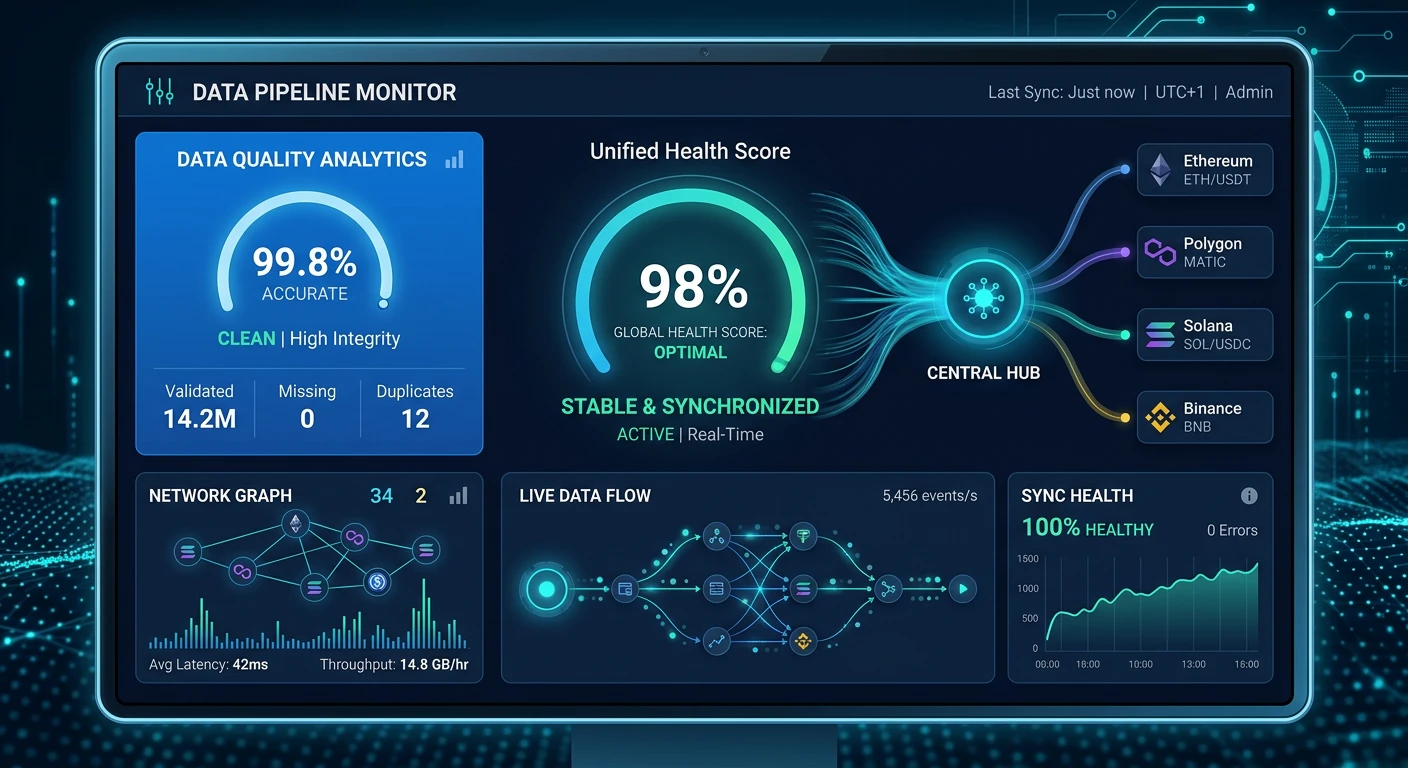

Products used: Data Pipeline Monitor, Data Quality Analytics, Sync Health Dashboard

10 minutes | end-to-end pipeline audit time

1 | stale pipeline caught before business teams started querying

1 hour | time from flag to engineering fix deployed in production

Challenge

Every Monday morning at 8am, NeonEdge's CTO Priya Desai does something most executives would consider unusual: she checks the data before she checks the business. The sequence is deliberate. In a platform where the CEO's weekly revenue report, the finance team's NGR reconciliation, and the compliance team's AML flags all originate from the same underlying data feeds, stale or corrupted data doesn't just cause confusion — it causes the wrong decisions to be made with complete confidence.

The problem Priya inherited when she joined NeonEdge eighteen months ago was architectural. The platform had grown from a single blockchain to four chains in under a year, each with its own wallet infrastructure, settlement layer, and event emission patterns. Every time the tech team added a chain or a game provider, they wired up a new ETL connector. By the time Priya arrived, there were twenty-three active connectors flowing into the data warehouse, and no single place to see whether all twenty-three were healthy, current, and consistent with each other. When a connector silently fell behind or dropped rows, nobody knew until a business analyst sent a confused Slack message asking why yesterday's crash game revenue didn't match the payment provider settlement.

"Silent data failure is the worst kind of failure in iGaming. A broken server throws an error. A broken ETL connector just quietly serves yesterday's numbers as if they're today's — and everyone downstream makes decisions on data that's twelve hours stale without knowing it."

— Priya Desai, CTO, NeonEdge

The pre-Gaming Mind workflow involved querying three separate monitoring dashboards, cross-referencing row counts manually against expected thresholds, and then chasing Slack threads to figure out whether the anomaly was a pipeline issue or genuine business movement. On a good Monday it took forty minutes. On a bad Monday — the kind where two connectors had issues simultaneously — it consumed most of the morning. Meanwhile, the business teams were already running reports on whatever data happened to be in the warehouse, stale or not.

Solution

Priya now opens Gaming Mind AI at 8am and runs a structured pipeline audit in a single conversation before anyone else on the team has sent their first Slack message. The AI surfaces connector health, data freshness, row count anomalies, and data quality trend scores across all twenty-three connectors simultaneously, then sorts the findings by severity so Priya can act on what matters rather than scroll through what doesn't.

Here's what a typical Monday morning audit looks like:

Priya: "Good morning. Give me the data freshness status across all connectors — which sources are stale?"

| Connector | Source | Last Sync | Lag | Status |
|---|---|---|---|---|
| eth-transactions | Ethereum | 08:01:14 | 1 min | 🟢 |
| tron-wallet-events | Tron | 08:00:58 | 1 min | 🟢 |
| sol-crash-rounds | Solana | 08:02:03 | 0 min | 🟢 |
| polygon-crash-events | Polygon | 03:09:22 | 287 min | 🔴 |
| eth-live-casino | Ethereum | 08:01:45 | 1 min | 🟢 |
| tron-deposits | Tron | 08:00:30 | 2 min | 🟢 |
| sol-withdrawals | Solana | 08:01:12 | 1 min | 🟢 |
| eth-sports-bets | Ethereum | 07:59:48 | 3 min | 🟢 |
| polygon-wallet-connect | Polygon | 03:11:05 | 285 min | 🟡 |
| kyc-provider | External | 08:00:10 | 2 min | 🟢 |
| payment-gateway-fiat | External | 08:01:33 | 1 min | 🟢 |
| crm-events | Internal | 08:02:15 | 0 min | 🟢 |
| bonus-engine | Internal | 08:00:44 | 2 min | 🟢 |
| risk-scoring | Internal | 08:01:58 | 1 min | 🟢 |
| aml-flags | Internal | 08:00:22 | 2 min | 🟢 |
| game-provider-a | External | 07:58:30 | 4 min | 🟢 |
| game-provider-b | External | 08:02:01 | 0 min | 🟢 |
| game-provider-c | External | 07:59:05 | 3 min | 🟢 |
| player-sessions | Internal | 08:01:49 | 1 min | 🟢 |
| leaderboard-events | Internal | 08:00:36 | 2 min | 🟢 |
| referral-tracking | Internal | 08:02:08 | 0 min | 🟢 |
| chat-support | Internal | 07:59:52 | 2 min | 🟢 |
| sol-nft-rewards | Solana | 08:01:27 | 1 min | 🟢 |
| Summary | 23 connectors | | Avg lag: 26 min | 21 🟢 / 1 🟡 / 1 🔴 |

⚠️ Gaming Mind flags: The Polygon crash game event stream is stale by 4 hours 47 minutes — significantly outside the expected 5-minute sync window. This is not a brief lag spike; sustained delay of this magnitude indicates a broken or backlogged connector. 22 downstream tables are at risk if not repaired before business teams begin querying at 9am.

Twenty-one connectors are green. The two Polygon connectors are not. Gaming Mind flags the Polygon crash game event stream as the critical one: it is stale by four hours and forty-seven minutes, far outside the expected five-minute sync window. The AI differentiates between brief lag spikes, which happen in any distributed system, and sustained delays of the kind that indicate a broken or backlogged connector. A gap of four hours and forty-seven minutes on a source that should update every five minutes is unambiguously the latter. Gaming Mind automatically surfaces the affected downstream tables and flags which Monday reports will pull incorrect data if the connector isn't repaired before the business teams arrive.
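To make the spike-versus-sustained distinction concrete, here is a minimal Python sketch. It is not Gaming Mind's actual logic: the function name, the three labels, and the `SUSTAINED_MULTIPLE` cut-off are assumptions for illustration, and only the five-minute sync window comes from the audit above.

```python
from datetime import datetime, timedelta

# Only the 5-minute window comes from the case study; the multiple is hypothetical.
EXPECTED_SYNC_WINDOW = timedelta(minutes=5)
SUSTAINED_MULTIPLE = 6  # assumed: lag beyond 6x the window counts as sustained


def classify_freshness(last_sync: datetime, now: datetime,
                       window: timedelta = EXPECTED_SYNC_WINDOW,
                       sustained_multiple: int = SUSTAINED_MULTIPLE) -> str:
    """Return 'fresh', 'lag_spike', or 'stale' for a single connector."""
    lag = now - last_sync
    if lag <= window:
        return "fresh"
    if lag <= window * sustained_multiple:
        return "lag_spike"  # brief delay, normal in a distributed system
    return "stale"          # sustained delay: broken or backlogged connector


# Placeholder date; only the time of day is taken from the table above.
now = datetime(2024, 1, 1, 8, 2, 0)
print(classify_freshness(datetime(2024, 1, 1, 8, 1, 14), now))  # eth-transactions
print(classify_freshness(datetime(2024, 1, 1, 3, 9, 22), now))  # polygon-crash-events
```

A production check would likely also require the lag to persist across several consecutive polls before declaring a connector stale; this sketch looks at a single reading only.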

Priya: "What's the last row timestamp in that connector? How much data are we missing?"

| Metric | Value |
|---|---|
| Last ingested event timestamp | 03:09:22 Mon |
| Current time | 08:01:14 Mon |
| Total lag duration | 4 hr 51 min |
| Historical throughput | 2,400 events/hr |
| Estimated missing events | ~11,400 crash game events |
| Estimated missing wager volume | ~$180,000 |
| Connector expected sync window | Every 5 min |
| Lag onset (slowdown began) | ~01:00 Mon |

7-Day Lag Trend

| Day | Max Lag (min) | Incidents | Self-Resolved? |
|---|---|---|---|
| Mon (last week) | 4 | 0 | — |
| Tue | 3 | 0 | — |
| Wed | 7 | 0 | — |
| Thu | 5 | 0 | — |
| Fri | 6 | 0 | — |
| Sat | 118 | 1 | Yes (2 hr) |
| Sun | 2 | 0 | — |
| Mon (today) | 291+ | 1 (ongoing) | No |

⚠️ Gaming Mind flags: Approximately 11,400 missing crash game events representing ~$180K in unrecorded wagers. Lag onset at 1am points to a Polygon network congestion event rather than a NeonEdge infrastructure fault — but at 4h 51m this is outside the self-resolution window seen in prior incidents. Engineering intervention required.

The last successfully ingested event was timestamped at 3:09am. Gaming Mind estimates the gap at approximately 11,400 missing crash game events, based on the connector's historical throughput of 2,400 events per hour. That translates to roughly $180K in unrecorded wagers that haven't yet landed in the warehouse — not lost GGR, since the blockchain itself is immutable, but missing from every internal report until the backfill completes. More usefully, Gaming Mind shows the lag trend across the past seven days: the connector started slowing at 1am, which points toward a Polygon network congestion event rather than a NeonEdge infrastructure fault.
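The missing-event estimate is throughput multiplied by lag. The sketch below reproduces that arithmetic under the assumption of steady historical throughput; the function name is hypothetical and the date is a placeholder.

```python
from datetime import datetime


def estimate_missing_events(last_ingested: datetime, now: datetime,
                            events_per_hour: float) -> int:
    """Estimate events not yet ingested, assuming steady historical throughput."""
    lag_hours = (now - last_ingested).total_seconds() / 3600
    return round(lag_hours * events_per_hour)


# Timestamps and throughput from the table above; the date itself is a placeholder.
last_ingested = datetime(2024, 1, 1, 3, 9, 22)
now = datetime(2024, 1, 1, 8, 1, 14)
missing = estimate_missing_events(last_ingested, now, 2400)
print(missing)
```

Using the exact timestamps, the result lands within about 3% of the ~11,400 figure Gaming Mind reports. The source does not state which lag reading or rounding the tool applies, so a small difference is expected.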

Priya: "Has this connector had issues before? Is this a recurring pattern?"

| Incident | Date | Lag Onset | Peak Lag | Duration | Self-Resolved | Root Cause |
|---|---|---|---|---|---|---|
| #1 | 38 days ago | 01:14 | 94 min | 1 hr 52 min | Yes | Polygon network congestion |
| #2 | 19 days ago | 00:47 | 112 min | 1 hr 58 min | Yes | Polygon network congestion |
| #3 | 8 days ago | 01:03 | 127 min | 1 hr 41 min | Yes | Polygon network congestion |
| #4 (today) | Today | 01:00 | 291+ min | Ongoing | No | Polygon network congestion (extended) |

Pattern Summary

| Metric | Prior 3 Incidents | Today |
|---|---|---|
| Lag onset time | 00:47–01:14 | 01:00 |
| Peak lag | 94–127 min | 291+ min |
| Avg self-resolution time | 1 hr 50 min | Not resolved |
| Root cause | Polygon congestion | Polygon congestion |
| Engineering intervention needed | No | Yes |

⚠️ Gaming Mind flags: Three prior incidents in 90 days share the same signature — lag spikes between midnight and 2am correlated with Polygon mainnet congestion. All three self-resolved within 2 hours. Today's incident is nearly 5 hours old and is not healing. Same root cause, but requires engineering intervention. Full historical context available for incident description without digging through logs.

Three prior incidents in ninety days, all sharing the same signature: lag spikes beginning between midnight and 2am, correlated with high-congestion periods on the Polygon mainnet. All three resolved themselves within two hours as congestion cleared. This one is longer, nearly five hours at the point Priya is looking at it, which Gaming Mind notes is outside the self-resolution window for past incidents. It's the same root cause, but it's not healing on its own. The engineering team needs to intervene. Priya now has the full historical context she needs to write a precise incident description without anyone having to dig through logs at 8am.
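The "outside the self-resolution window" call can be sketched as a comparison against prior incident durations. The rule used here (escalate once the current lag exceeds the longest prior self-resolved incident) is an assumption for illustration, not Gaming Mind's documented behaviour. The durations come from the incident table above.

```python
from statistics import mean

# Durations of the three prior incidents, in minutes (1h52, 1h58, 1h41).
PRIOR_DURATIONS_MIN = [112, 118, 101]


def needs_intervention(current_lag_min: float, prior_durations: list[float]) -> bool:
    """True once the current lag outlasts every prior self-resolved incident."""
    return current_lag_min > max(prior_durations)


avg = mean(PRIOR_DURATIONS_MIN)
print(f"avg self-resolution: {int(avg // 60)} hr {int(avg % 60)} min")
print(needs_intervention(291, PRIOR_DURATIONS_MIN))
```

The average works out to the 1 hr 50 min shown in the pattern summary, and today's 291-minute lag is well past the longest earlier incident.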

Priya: "What are the downstream report impacts if I don't fix this before 9am?"

| Report | Scheduled Run | Consuming Team | Data Dependency | GGR Impact | Priority |
|---|---|---|---|---|---|
| CEO Weekly Revenue Summary | 09:15 Mon | Executive | polygon-crash-events | ~$180K undercount | 🔴 P1 |
| Finance NGR Reconciliation | 09:30 Mon | Finance | polygon-crash-events, polygon-wallet-connect | ~$180K undercount | 🔴 P1 |
| AML Monitoring Dashboard | Refreshes every 4 hr (next: 10:00) | Compliance | polygon-crash-events | Early-morning window missed | 🟡 P2 |
| Player Activity Report | 10:00 Mon | Product | polygon-crash-events | Crash round counts understated | 🟡 P2 |
| Affiliate GGR Attribution | 11:00 Mon | Marketing | polygon-crash-events | Partner revenue understated | 🟡 P2 |
| Risk Scoring Refresh | Continuous | Risk | polygon-wallet-connect | Wallet activity gap | 🟢 P3 |

Recommended Actions Before 09:15

| Action | Owner | Deadline |
|---|---|---|
| Restart Polygon ingestion job | On-call infra engineer | 08:30 |
| Trigger backfill from 03:09 | On-call infra engineer | 08:30 |
| Block CEO report until lag < 5 min | Priya | 08:20 |
| Notify Finance of NGR delay | Priya | 08:20 |

⚠️ Gaming Mind flags: The CEO's Monday revenue summary pulls at 09:15am and the Finance NGR reconciliation at 09:30am — both will understate Polygon crash GGR by approximately $180K if the connector is not repaired first. The CEO report is the highest-priority dependency. Recommend blocking it from running until backfill is confirmed current within a 5-minute lag threshold.

Gaming Mind maps the dependency chain. The CEO's Monday revenue summary pulls from a scheduled query at 9:15am. The finance team's NGR reconciliation runs at 9:30am. Both will draw on the warehouse in its current state, which means Polygon crash GGR will be understated by approximately $180K. The compliance team's AML monitoring dashboard, which refreshes every four hours, will also miss the early-morning Polygon activity window. Gaming Mind ranks the CEO report as the highest-priority dependency — an incorrect revenue number at a Monday leadership standup is exactly the kind of error that creates downstream confusion for an entire week. Priya makes a note: block the CEO report from running until the backfill is confirmed complete.
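The dependency mapping amounts to a lookup from stale connectors to the reports that read from them. A minimal sketch follows, using the reports with fixed run times from the table above. The `Report` class and `reports_at_risk` function are hypothetical names, and the continuously refreshing dashboards are left out for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    name: str
    scheduled: str             # zero-padded "HH:MM", so string order is time order
    depends_on: frozenset[str]


REPORTS = [
    Report("Affiliate GGR Attribution", "11:00", frozenset({"polygon-crash-events"})),
    Report("Finance NGR Reconciliation", "09:30",
           frozenset({"polygon-crash-events", "polygon-wallet-connect"})),
    Report("CEO Weekly Revenue Summary", "09:15", frozenset({"polygon-crash-events"})),
    Report("Player Activity Report", "10:00", frozenset({"polygon-crash-events"})),
]


def reports_at_risk(reports, stale_connectors):
    """Reports that read from any stale connector, earliest scheduled run first."""
    stale = set(stale_connectors)
    hit = [r for r in reports if r.depends_on & stale]
    return sorted(hit, key=lambda r: r.scheduled)


for report in reports_at_risk(REPORTS, {"polygon-crash-events"}):
    print(report.scheduled, report.name)
```

Sorting by scheduled run puts the 09:15 CEO summary first, which matches the priority Gaming Mind assigns it.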

Priya: "Show me the overall data quality score trends for this week versus last week."

| Dimension | Week Prior | This Week | Change | Status |
|---|---|---|---|---|
| Completeness | 99.0% | 99.1% | +0.1pp | 🟢 Stable |
| Consistency | 97.8% | 97.9% | +0.1pp | 🟢 Stable |
| Validity | 98.4% | 98.9% | +0.5pp | 🟢 Improving |
| Timeliness | 99.2% | 96.7% | -2.5pp | 🔴 Incident-driven |

Timeliness Breakdown (This Week)

| Connector Group | Timeliness Score | Driver |
|---|---|---|
| ETH connectors (3) | 99.6% | Normal |
| Tron connectors (2) | 99.4% | Normal |
| Solana connectors (3) | 99.7% | Normal |
| Polygon connectors (2) | 47.1% | Today's lag incident |
| Internal/external (13) | 99.8% | Normal |
| All connectors (23) | 96.7% | Polygon incident |

⚠️ Gaming Mind flags: Three of four quality dimensions are stable or improving. Validity scores improved from 98.4% to 98.9% following the SOL transaction parser patch two weeks ago. The timeliness dip is entirely driven by today's Polygon incident — this is a point-in-time anomaly, not a degrading structural trend. Core data quality continues to improve.

Three of the four quality dimensions are stable or improving. Completeness held at around 99% (99.0% last week, 99.1% this week) across all non-Polygon connectors. Validity scores, meaning the share of records that pass schema and business-rule checks, improved from 98.4% to 98.9% after Priya's team patched a known edge case in the SOL transaction parser two weeks ago. Timeliness is the outlier, dragged down by this morning's Polygon incident. Gaming Mind separates the incident-driven timeliness dip from the underlying structural trend, making it clear this is a point-in-time anomaly rather than a degrading pattern. For a platform built on provably fair gaming, where data integrity is literally a product promise, that distinction matters.

Priya: "Are there any row count anomalies on the other connectors — anything that looks off even if it's technically syncing on time?"

| Connector | Expected Rows (04:00–06:00) | Actual Rows | Variance | Z-Score | Status |
|---|---|---|---|---|---|
| eth-transactions | 18,400 | 18,210 | -1.0% | 0.4 | 🟢 Normal |
| eth-live-casino | 9,200 | 6,348 | -31.0% | 2.4 | 🟡 Flagged |
| tron-wallet-events | 6,800 | 6,755 | -0.7% | 0.2 | 🟢 Normal |
| tron-deposits | 3,100 | 3,088 | -0.4% | 0.1 | 🟢 Normal |
| sol-crash-rounds | 11,200 | 11,143 | -0.5% | 0.3 | 🟢 Normal |
| sol-withdrawals | 2,400 | 2,391 | -0.4% | 0.2 | 🟢 Normal |
| polygon-crash-events | 4,800 | 0 | -100% | — | 🔴 Stale |
| game-provider-a | 7,300 | 7,284 | -0.2% | 0.1 | 🟢 Normal |
| game-provider-b | 5,600 | 5,572 | -0.5% | 0.2 | 🟢 Normal |
| player-sessions | 14,200 | 14,188 | -0.1% | 0.0 | 🟢 Normal |
| (13 others) | varies | within range | < ±2% | < 1.0 | 🟢 Normal |

Annotation — ETH Live Casino Flag

| Detail | Value |
|---|---|
| Shortfall vs. expected | 2,852 rows (-31%) |
| Z-score | 2.4 standard deviations below baseline (threshold: > 2.0) |
| Supplier maintenance notice received | Friday (pre-announced) |
| Action required | None — log against maintenance window |
| Finance team notification needed | Yes (proactive) |

⚠️ Gaming Mind flags: 21 of 23 connectors are within normal range. The ETH live casino connector ingested 31% fewer rows than expected between 04:00–06:00, sitting at 2.4 standard deviations below the 12-Monday baseline. However, the ETH live casino supplier issued a scheduled maintenance notice on Friday — this volume drop is expected and does not require an engineering ticket. Proactively notify Finance to prevent a reconciliation query later.

Twenty-one connectors are within normal range. One flag: the ETH live casino connector ingested 31% fewer rows than expected between 4am and 6am this morning. Gaming Mind's anomaly model compares against the same two-hour window across the prior twelve Mondays and flags anything beyond two standard deviations. A 31% drop sits at 2.4 standard deviations — worth investigating, though the connector itself is live and syncing. Priya checks the annotation Gaming Mind surfaces automatically: the ETH live casino supplier sent a scheduled maintenance notice Friday. The lower row count is expected. This one doesn't need an engineering ticket; it needs to be noted so the finance team doesn't raise a query about it later.
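The anomaly check described here, comparing a window's row count against the same window on prior Mondays and flagging beyond two standard deviations, can be sketched in a few lines. The function names are hypothetical. The twelve baseline values are invented for illustration because the case study does not publish the real history, so the z-score this produces will not match the 2.4 reported above.

```python
from statistics import mean, stdev


def variance_pct(expected: float, actual: float) -> float:
    """Percentage difference between actual and expected row counts."""
    return (actual - expected) / expected * 100


def z_score(baseline: list[float], actual: float) -> float:
    """Standard deviations between `actual` and the baseline mean."""
    return (actual - mean(baseline)) / stdev(baseline)


def is_anomalous(baseline: list[float], actual: float, threshold: float = 2.0) -> bool:
    """Flag anything more than `threshold` standard deviations from baseline."""
    return abs(z_score(baseline, actual)) > threshold


# Invented twelve-Monday baseline for the 04:00-06:00 window (not source data).
baseline = [9100, 9350, 8900, 9500, 9250, 9050, 9400, 9150, 9300, 8950, 9200, 9250]

print(round(variance_pct(9200, 6348), 1))  # eth-live-casino expected vs. actual
print(is_anomalous(baseline, 6348))
```

The variance calculation does reproduce the -31.0% in the table. A flag like this only says the count is statistically unusual; matching it to the supplier's maintenance notice is what turns it from a ticket into a note for Finance.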

Priya: "Give me the remediation priority list. What do I tell the engineering team?"

| Priority | Incident | Severity | Reports at Risk | Recommended Action | Time-to-Impact |
|---|---|---|---|---|---|
| P1 | Polygon crash-events connector stale (287 min) | 🔴 Critical | CEO Revenue Summary (09:15), Finance NGR (09:30), AML Dashboard | Restart ingestion job; trigger backfill from 03:09; hold CEO report until lag < 5 min | 74 min to CEO report |
| Informational | ETH live casino row count low (-31%, 04:00–06:00) | 🟡 Informational | Finance NGR Reconciliation | Log against supplier maintenance window; notify Finance proactively | None — pre-announced |

P1 — Polygon Connector: Recommended Steps

| Step | Action | Owner |
|---|---|---|
| 1 | Restart Polygon ingestion job (connector ID: polygon-crash-events) | On-call infra |
| 2 | Trigger backfill from timestamp 03:09:22 | On-call infra |
| 3 | Monitor lag every 5 min until < 5 min threshold confirmed | On-call infra |
| 4 | Block CEO revenue report (scheduled 09:15) until backfill confirmed current | Priya |
| 5 | Notify Finance team of NGR reconciliation delay | Priya |

⚠️ Gaming Mind flags: One P1 action required — Polygon connector restart and backfill. The ETH live casino anomaly is informational only; log it and communicate proactively to Finance. No other connectors require action. Paste the P1 section into Slack to the on-call infrastructure engineer with the incident history attached.

Gaming Mind produces a ranked action list: the Polygon connector is P1 — restart the ingestion job, trigger a backfill from 3:09am, and hold the 9:15am CEO report until data confirms current within a five-minute lag threshold. The ETH live casino volume dip is classified as informational — log it against the maintenance window and communicate to the finance team proactively so it doesn't surface as an unexplained anomaly in the NGR reconciliation. No other connectors require action. Priya pastes the Polygon section directly into a Slack message to the on-call infrastructure engineer, includes the incident history Gaming Mind surfaced, and marks the CEO report as blocked in the internal reporting system.
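The "hold the report until lag is under five minutes" rule is easy to express as a gate in a report scheduler. This is a sketch under assumptions: the function name and the shape of the lag dictionary are hypothetical, and NeonEdge's internal reporting system is not described in enough detail to say how the block is actually enforced.

```python
def report_may_run(dependency_lags_min: dict[str, float],
                   max_lag_min: float = 5.0) -> bool:
    """Allow a report only when every upstream connector is inside the lag threshold."""
    return all(lag < max_lag_min for lag in dependency_lags_min.values())


# Lag readings in minutes, keyed by connector ID.
print(report_may_run({"polygon-crash-events": 287}))  # during the incident
print(report_may_run({"polygon-crash-events": 2}))    # after the backfill catches up
```

A gate like this fails closed: if any dependency is stale, the report waits rather than publishing an undercount.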

Results

Stale data intercepted before the CEO saw incorrect numbers

The engineering team received Priya's Slack message at 8:14am with a full incident description: connector ID, failure timestamp, estimated missing row count, historical precedent, and the exact reports at risk. The ingestion job was restarted by 8:40am and the backfill completed at 9:11am, four minutes before the CEO's revenue report was scheduled to run. The report pulled clean, current data. The CEO, Marcus, never saw an incorrect number.

Root cause identified without a single log query

In previous incidents, diagnosing whether a pipeline failure was caused by internal infrastructure or an upstream chain event required an engineer to manually correlate internal metrics with Polygon network status feeds — a thirty-to-forty-five-minute exercise. Gaming Mind surfaced the congestion correlation automatically by comparing the connector's lag onset timestamp with historical Polygon throughput data. The on-call engineer confirmed the root cause in under five minutes.

False alarm resolved before it became a ticket

The ETH live casino row count anomaly, which would previously have appeared as an unexplained discrepancy in the finance team's Monday reconciliation, was matched to the supplier maintenance window before anyone queried it. Priya sent a one-line Slack note to the finance lead at 8:20am: expected volume drop on ETH live casino, maintenance window, no action needed. Zero tickets raised, zero time spent investigating.

Data quality trend separated from incident noise

By distinguishing the Polygon timeliness incident from the underlying quality trend, Gaming Mind gave Priya the data she needed to make a clear architectural argument: NeonEdge's core data quality is improving, but the Polygon connector's recurring congestion sensitivity is a structural risk that warrants a circuit-breaker pattern. Priya added that recommendation to the next sprint planning session.
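For readers unfamiliar with the term, a circuit breaker here would stop downstream reads automatically once a connector has been unhealthy for several checks in a row, instead of relying on a manual block. The sketch below is a simplified illustration, not NeonEdge's design: the class name, thresholds, and reset behaviour are all assumptions, and it omits the half-open probing state that fuller implementations include.

```python
class ConnectorCircuitBreaker:
    """Trips after consecutive lag-check failures; resets on one healthy check."""

    def __init__(self, max_lag_min: float = 5.0, failure_threshold: int = 3):
        self.max_lag_min = max_lag_min              # assumed healthy-lag limit
        self.failure_threshold = failure_threshold  # assumed number of bad checks to trip
        self.consecutive_failures = 0
        self.is_open = False                        # open = downstream reads are held

    def record_lag(self, lag_min: float) -> bool:
        """Record one lag check; return True if downstream reads are allowed."""
        if lag_min < self.max_lag_min:
            self.consecutive_failures = 0
            self.is_open = False
        else:
            self.consecutive_failures += 1
            if self.consecutive_failures >= self.failure_threshold:
                self.is_open = True
        return not self.is_open


breaker = ConnectorCircuitBreaker()
for lag in (7, 40, 120, 2):
    print(lag, breaker.record_lag(lag))
```

Requiring several consecutive failures keeps brief congestion spikes from tripping the breaker, while a sustained delay like Monday's would hold dependent reports without anyone needing to be awake.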

"I used to start every Monday not knowing whether the data the business was about to rely on was actually trustworthy. Now I know by 8:15am — which means when the CEO opens his revenue summary at 9:15, I've already guaranteed it's correct. That's the job. Everything else is secondary."

— Priya Desai, CTO, NeonEdge
